Pilot to Production: How Financial Institutions Put AI to Work Safely and at Scale

- Rahul Goel

- Nov 11


From AI Hype to Accountable Execution

AI has become the buzzword of the decade in financial services. Every institution is experimenting - launching pilots, setting up innovation labs, and testing generative and predictive models.

Yet beneath the excitement lies a sobering truth: most AI initiatives never move beyond the pilot stage. The problem isn’t the technology. The underlying intelligence often works - most of the time, impressively well. What fails is the translation from proof of concept to production, where real-world systems demand governance, compliance, security, and measurable ROI.

Without these, even the most promising prototypes remain stuck in innovation silos, detached from the metrics that actually drive business performance.

Financial institutions face a critical inflection point. To move past the hype, they must treat AI not as a lab experiment but as a core business capability - one that is accountable, explainable, and scalable. The winners in this next phase of AI adoption will not be those with the largest R&D budgets, but those that can turn experimentation into accountable execution.

The Abstraction Trap: Why Pilots Die Quietly

For many financial institutions, AI proofs of concept start with excitement and end in silence. They show potential in controlled environments - yet never make it to production. The challenge isn’t that the models fail; it’s that they exist in abstraction, disconnected from the complex realities of the financial ecosystem.

Integrating AI into live systems is not plug-and-play. It demands alignment with security protocols, governance frameworks, and regulatory controls. Banks must ensure that no financial data is exposed to the open internet, that all processing complies with privacy standards, and that every component of the AI workflow is auditable and explainable.

Unlike startups, financial institutions operate in one of the most controlled and risk-averse environments in the world. Every model must be vetted by risk, compliance, IT security, and enterprise architecture before touching production. Even when a model performs well in the lab, replicating that success securely and at scale can take months - sometimes years.

Without bridging these disciplines early - security, architecture, cost management, IT compliance, and regulation - even the most promising pilots die quietly in the abstraction trap.

The 90-Day Production Sprint: One Workflow Beats a Big Program

The most effective institutions focus on one problem, one team, and one measurable outcome at a time. A 90-day sprint forces discipline. It’s long enough to generate results, yet short enough to stay within quarterly cycles. This cadence mirrors how banks already manage performance and risk - making it a natural fit for enterprise AI deployment.

The sprint model creates momentum. Teams see progress quickly. Risk managers see compliance built in from day one. Executives see value before the next planning cycle.

When a project delivers measurable gains in speed, accuracy, or satisfaction in one quarter, AI stops being a proof of concept - and becomes a proof of trust. That’s what moves it from the lab to the leadership table.

Year-End Ready: What Real Proof Looks Like

Year-end readiness isn’t a roadmap or a slide deck - it’s evidence. Every institution claiming AI progress should be able to show five simple things:

1️⃣ A plain-language description of what the AI does and why it matters.

2️⃣ A before-and-after scorecard with clear business metrics.

3️⃣ Documented risks - and how they were mitigated.

4️⃣ At least one live, ROI-generating use case.

5️⃣ A plan for the next quarter built on verified success.

When AI can demonstrate this kind of proof, it stops being a technology story and becomes a business story. It shifts from initiative to asset in the eyes of senior leadership.
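As an illustration of the second item, a before-and-after scorecard can be as small as a list of metrics with a baseline and a current value. The sketch below is one possible shape, not a prescribed format: the `Metric` class, the metric names, and the numbers are all hypothetical placeholders, not results from any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """One row of a hypothetical before-and-after scorecard."""
    name: str
    before: float            # baseline value measured before the AI went live
    after: float             # value measured after the sprint
    higher_is_better: bool = True

    def change_pct(self) -> float:
        """Relative change from the baseline, in percent (baseline must be non-zero)."""
        return (self.after - self.before) / self.before * 100.0

    def improved(self) -> bool:
        """True when the metric moved in the desired direction."""
        if self.higher_is_better:
            return self.after > self.before
        return self.after < self.before


def render_scorecard(metrics: list[Metric]) -> str:
    """Render one plain-text line per metric for a leadership summary."""
    lines = []
    for m in metrics:
        status = "improved" if m.improved() else "not improved"
        lines.append(
            f"{m.name}: {m.before:g} -> {m.after:g} "
            f"({m.change_pct():+.1f}%, {status})"
        )
    return "\n".join(lines)


# Illustrative placeholder values only (assumptions, not measured results).
EXAMPLE_METRICS = [
    Metric("avg_handling_time_min", before=12.0, after=9.0, higher_is_better=False),
    Metric("first_contact_resolution_rate", before=0.60, after=0.66),
]

if __name__ == "__main__":
    print(render_scorecard(EXAMPLE_METRICS))
```

Keeping the direction of improvement explicit per metric avoids a common reporting mistake, where a drop in handling time is read as a regression.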

Built-In Oversight: Not Oversight at the End

In regulated environments, oversight must be part of delivery - not an afterthought. Too often, governance arrives at the end, creating bottlenecks and mistrust. Instead, monitoring, fallback plans, and explainability should be designed into solutions from the start.

Executives care most about whether AI outcomes can be traced, explained, and reversed when needed. When oversight is embedded early, risk teams become collaborators - not gatekeepers. The result: faster approvals, lower compliance friction, and more stable deployments.
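To make "designed in from the start" concrete, here is a minimal sketch of a decision wrapper that records every outcome in an audit log and hands low-confidence cases to a fallback path. Everything in it is an assumption made for illustration: the function names, the 0.8 confidence threshold, the toy model, and the in-memory list standing in for a real audit store. It covers tracing and fallback only; reversing a decision would need a separate mechanism.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class DecisionRecord:
    """One audit entry: what was decided, by which path, and why."""
    request_id: str
    source: str        # "model" or "fallback"
    outcome: str
    confidence: float
    reason: str
    timestamp: str


def decide_with_oversight(
    request_id: str,
    features: dict,
    model: Callable[[dict], tuple[str, float, str]],
    fallback: Callable[[dict], str],
    audit_log: list[str],
    min_confidence: float = 0.8,  # hypothetical threshold, set by risk policy
) -> DecisionRecord:
    """Run the model, fall back when confidence is low, and always log."""
    outcome, confidence, reason = model(features)
    source = "model"
    if confidence < min_confidence:
        outcome = fallback(features)
        source = "fallback"
        reason = f"model confidence {confidence:.2f} below {min_confidence:.2f}"
    record = DecisionRecord(
        request_id=request_id,
        source=source,
        outcome=outcome,
        confidence=confidence,
        reason=reason,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    # One JSON line per decision, so the trail can be replayed by auditors.
    audit_log.append(json.dumps(asdict(record)))
    return record


def toy_model(features: dict) -> tuple[str, float, str]:
    """Stand-in for a real model: returns (outcome, confidence, reason)."""
    if features["amount"] < 1000:
        return "approve", 0.95, "amount under limit"
    return "approve", 0.55, "amount near limit"


def manual_review_fallback(features: dict) -> str:
    """Stand-in fallback: route the case to a human reviewer."""
    return "refer_to_human"
```

Calling `decide_with_oversight("r1", {"amount": 50}, toy_model, manual_review_fallback, log)` with an empty list `log` returns a record whose `source` is `"model"`; the same call with an amount of 5000 is routed to the fallback, and both decisions end up in `log`.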

A Playbook for Safe Scale

Moving AI from lab to live production requires more than technical excellence - it demands a disciplined framework that balances innovation with control. At BrainRidge Consulting, our “Safe Scale” playbook rests on five foundational pillars:

Start with Measurable Business Outcomes: Define success upfront - fraud reduction, agent assist, operational excellence, or SDLC efficiency. Tie every experiment to a business KPI, not just a model metric.

Embed Governance and Oversight from Day One: Build traceability and auditability into the design process. Establish clear ownership of decisions.

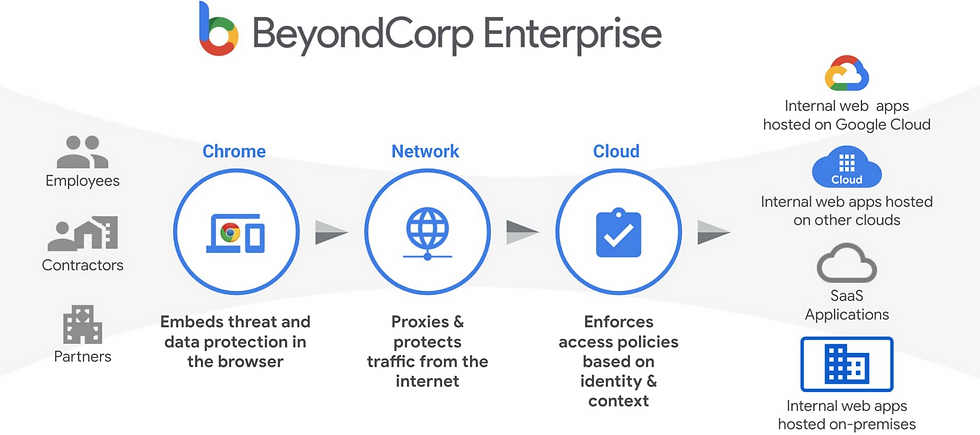

Secure Data by Design: Adopt a zero-trust approach to data. No data can leave the security boundaries.

Build for Integration, Not Isolation: AI must plug into core systems and workflows. Collaboration with architecture, security, and governance teams is non-negotiable.

Balance Innovation with Cost Discipline: AI scale must also be economic scale. Implement cost governance: monitor performance, optimize workloads, and retire low-value models.
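The cost-governance pillar can be sketched as a periodic portfolio review that compares what each model costs to run with the value it is estimated to deliver. The example below is a rough illustration under stated assumptions: the `ModelCostReport` fields, the value-to-cost threshold of 1.0, and the decision labels are hypothetical, and estimating "value" is a business judgment this code does not attempt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelCostReport:
    """Hypothetical monthly figures for one deployed model."""
    name: str
    monthly_cost: float    # run cost in a single currency
    monthly_value: float   # estimated business value in the same currency


def review_portfolio(
    reports: list[ModelCostReport],
    min_value_to_cost: float = 1.0,  # assumed threshold: value must cover cost
) -> dict[str, str]:
    """Label each model 'keep', 'retire_candidate', or 'needs_review'."""
    decisions: dict[str, str] = {}
    for report in reports:
        if report.monthly_cost <= 0:
            # A ratio is meaningless without a positive cost; flag for a human.
            decisions[report.name] = "needs_review"
            continue
        ratio = report.monthly_value / report.monthly_cost
        if ratio >= min_value_to_cost:
            decisions[report.name] = "keep"
        else:
            decisions[report.name] = "retire_candidate"
    return decisions
```

The output is deliberately a list of candidates rather than an automatic shutdown: retiring a model in a regulated environment would still go through the ownership and oversight steps described above.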

Safe Scale Is a Mindset

Safe scale isn’t about slowing innovation - it’s about building AI programs that can withstand real-world complexity. Institutions that follow this playbook move faster precisely because they’ve embedded trust, security, and accountability into their foundations.

At BrainRidge Consulting, we call this the shift from “AI in labs” to “AI in life” - where models don’t just predict outcomes but produce measurable business impact, safely and at scale.

Trust Is the Shortcut to Scale

The AI gap in financial services isn’t technical - it’s operational and cultural. The institutions that will lead the next wave aren’t chasing every new model - they’re the ones that start small, prove fast, and embed oversight early. AI readiness isn’t about more tech. It’s about precision, discipline, and proof. Because when AI produces outcomes that executives can explain, auditors can verify, and customers can feel, it becomes a competitive advantage, not a compliance risk.

The next generation of financial leaders will measure AI success by how many AI implementations they can stand behind with confidence over the long term. Because in the future of financial services, trust is the true accelerator of AI at scale.
